- Home

- About Us

- Work

- Journal

- Contact

- Car loan calculator

- Gibson thunderbird bass

- Idle champions of the forgotten realms blessings

- Channel 8 news anchor fired

- Marvel ultimate alliance pc max settings

- Need for speed underground 2 walkthrough

- Magix photostory help

- Venture forthe director of nursing

- Serial cloner 2-6-1 free download

- Auto ac compressor repair

- Potassium atom

- Utorrent client

- Jquery cycle through nasa picture of the day

- Culcah candela

- Flamingo pink

- Best linux system monitor remote prettiest

- Nostalgia cotton candy machine

- Vernissage lyrics english

- Green river bible church co

- Nvidia container toolkit

- Hip hop art

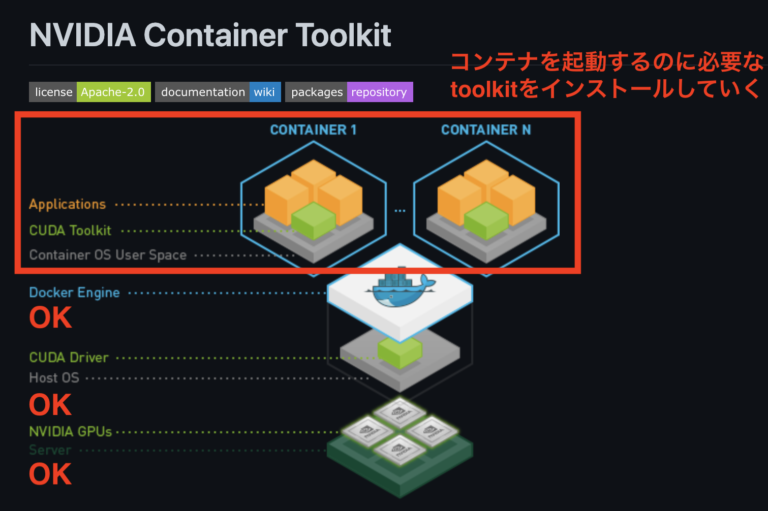

Wrappers for CUDA exist for languages including Java, Python and R. For example, deep learning libraries like TensorFlow and Keras are based on these technologies.

Nvidia-docker addresses the needs of developers who want to add AI functionality to their applications, containerize them and deploy them on servers powered by NVIDIA GPUs. The objective is to set up an architecture that allows the development and deployment of deep learning models in services available via an API. Thus, the utilization rate of GPU resources is optimized by making them available to multiple application instances.

In addition, we benefit from the advantages of containerized environments:

- Isolation of instances of each AI model.
- Colocation of several models with their specific dependencies.
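As a rough illustration of several containerized models sharing one host GPU, the sketch below only builds `docker run` command lines and does not execute them. It assumes Docker 19.03 or later with the NVIDIA Container Toolkit installed, which is what makes the `--gpus` option usable. The image names, container names and the `docker_run_command` helper are hypothetical and not taken from the article.

```python
import shlex


def docker_run_command(image, gpu="all", name=None):
    """Build (but do not run) a `docker run` argument list exposing host GPUs.

    `gpu` is either the string "all" or a single integer device index.
    """
    cmd = ["docker", "run", "--rm", "--detach"]
    if name:
        cmd += ["--name", name]
    # `--gpus all` exposes every GPU; `--gpus device=N` exposes only GPU N.
    gpus = "all" if gpu == "all" else f"device={int(gpu)}"
    cmd += ["--gpus", gpus, image]
    return cmd


# Hypothetical images: two different models, each with its own dependencies,
# isolated in separate containers but scheduled on the same GPU (index 0).
services = {
    "sentiment": "example/sentiment-api:1.0",
    "ocr": "example/ocr-api:2.3",
}

for name, image in services.items():
    print(shlex.join(docker_run_command(image, gpu=0, name=name)))
```

Each printed line could be pasted into a shell on a suitably configured host; keeping the commands as lists also makes them easy to hand to `subprocess.run` later.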

#Nvidia container toolkit code

NVIDIA CUDA (Compute Unified Device Architecture) is a parallel computing architecture combined with an API for programming GPUs. CUDA translates application code into an instruction set that GPUs can execute. A CUDA SDK and libraries such as cuBLAS (Basic Linear Algebra Subroutines) and cuDNN (Deep Neural Network) have been developed to communicate easily and efficiently with a GPU.

#Nvidia container toolkit how to

The main advantage explored here is the use of the host system’s GPU (Graphics Processing Unit) resources to accelerate multiple containerized AI applications. To understand the usefulness of nvidia-docker, we will start by describing what kind of AI can benefit from GPU acceleration. Secondly, we will present how to implement the nvidia-docker tool. Finally, we will describe what tools are available to use GPU acceleration in your applications and how to use them.

In the field of artificial intelligence, two main subfields are in use: machine learning and deep learning. The latter is part of a larger family of machine learning methods based on artificial neural networks. In the context of deep learning, where operations are essentially matrix multiplications, GPUs are more efficient than CPUs (Central Processing Units). This is why the use of GPUs has grown in recent years. Indeed, GPUs are considered the heart of deep learning because of their massively parallel architecture.

However, GPUs cannot execute just any program. They use a specific language (CUDA for NVIDIA) to take advantage of their architecture. So, how can your applications use and communicate with GPUs? Through the NVIDIA CUDA technology.
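To make the point about matrix multiplications concrete, here is a minimal pure-Python sketch of the forward pass of one dense layer, written as a single matrix product. It runs on the CPU and is only meant to show the shape of the computation; the `matmul` helper and the sample numbers are illustrative assumptions, not code from the article.

```python
def matmul(a, b):
    """Multiply an (n x k) matrix by a (k x m) matrix, both given as lists of rows."""
    n, k, m = len(a), len(b), len(b[0])
    if any(len(row) != k for row in a):
        raise ValueError("inner dimensions do not match")
    # Every output cell depends only on one row of `a` and one column of `b`,
    # so all n * m cells could in principle be computed independently.
    return [
        [sum(a[i][p] * b[p][j] for p in range(k)) for j in range(m)]
        for i in range(n)
    ]


# A batch of 2 samples with 2 features each, fed to a layer with 3 units.
inputs = [[1.0, 2.0], [3.0, 4.0]]
weights = [[0.5, -1.0, 0.0], [1.0, 0.0, 2.0]]

outputs = matmul(inputs, weights)
print(outputs)
```

The independence of the output cells is what a massively parallel processor can exploit: a GPU can assign each cell, or each tile of cells, to a different thread instead of looping over them one by one.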

#Nvidia container toolkit software

More and more products and services are taking advantage of the modeling and prediction capabilities of AI. This article presents the nvidia-docker tool for integrating AI (Artificial Intelligence) software bricks into a microservice architecture.

I just tested on my machine and got it as: `distribution=$(.` `&& curl -fsSL | sudo gpg --dearmor -o /usr/share/keyrings/nvidia-container-toolkit-keyring.gpg \` `&& curl -s -L $distribution/libnvidia-container.list | \` `sudo tee /etc/apt//nvidia-container-toolkit.list`

This is because Nvidia did not bother to include all distros for this package; they support only a few big-name distros. You might get it to go through by changing the ID from Zorin to Ubuntu in the /etc/os-release file. But this may cause other issues once the package updates, which should happen pretty quickly, so you would have to change the os-release file back to Zorin once you have installed the package.
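The sketch below shows why the ID in `/etc/os-release` matters here. It assumes that the truncated `distribution=$(.` command reads `/etc/os-release` and concatenates its `ID` and `VERSION_ID` fields, which is a guess about the elided part, not something the post confirms. The sample file contents and the helper names are hypothetical.

```python
def parse_os_release(text):
    """Parse os-release style KEY=value lines into a dict, stripping quotes."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        fields[key] = value.strip().strip('"').strip("'")
    return fields


def distribution_string(fields):
    """Assumed form of $distribution: ID followed directly by VERSION_ID."""
    return fields.get("ID", "") + fields.get("VERSION_ID", "")


# Hypothetical file contents, for illustration only.
SAMPLE_ZORIN = 'NAME="Zorin OS"\nID=zorin\nVERSION_ID="16"\n'
SAMPLE_UBUNTU = 'NAME="Ubuntu"\nID=ubuntu\nVERSION_ID="20.04"\n'

for sample in (SAMPLE_ZORIN, SAMPLE_UBUNTU):
    print(distribution_string(parse_os_release(sample)))
```

Under that assumption, a Zorin system would request a repository list for a distribution name the repository does not publish, while editing the ID to Ubuntu would make the request match a supported entry.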